In the first post of this series, we looked at how we can display a UIView in a SwiftUI hierarchy, as well as how we can change properties on said view. In the second post, we explored options for exposing this view’s delegate to our SwiftUI view, allowing us to provide both an object to act as delegate as well as a way to respond to the delegate directly in the SwiftUI view.

Our demo code is presently:

class ThirdPartyUIView: UIView {
    var shouldAdd: Bool = true
    var delegate: ThirdPartyViewDelegate?

    func changeInternalValue() { }
}

struct ThirdPartyViewRepresentable: UIViewRepresentable {
    var delegate: ThirdPartyViewDelegate? = nil
    var shouldAdd: Bool

    func makeUIView(context: Context) -> ThirdPartyUIView {
        let view = ThirdPartyUIView()
        view.shouldAdd = shouldAdd
        view.delegate = delegate
        return view
    }

    func updateUIView(_ uiView: ThirdPartyUIView, context: Context) {
        uiView.shouldAdd = shouldAdd
    }
}

struct ThirdPartyView: View {
    var shouldAdd: Bool
    @State private var coordinator = ThirdPartyCoordinator()

    var body: some View {
        ThirdPartyViewRepresentable(delegate: coordinator, shouldAdd: shouldAdd)
    }
}

struct ThirdPartyDemoView: View {
    @State private var shouldAdd = true
    @State private var currentValue = 0

    var body: some View {
        Text("\(currentValue)")
        ThirdPartyView(shouldAdd: shouldAdd)
            .viewChangedValueTo { view, newValue in
                currentValue = newValue
            }
        Toggle("Add?", isOn: $shouldAdd)
        Button("Perform Action") {
        }
    }
}

What does our Button’s action need to be for us to trigger the ThirdPartyUIView.changeInternalValue() method?

There are several ways we can solve this. One approach might be to have something trigger the action in the view representable’s updateUIView(_:context:) method. As noted in part one, updating a property on the UIViewRepresentable triggers that method, so we just need to add a property for that purpose. For example, we could add a new @State Boolean that is passed from our view down into the view representable, and perform the action whenever its value changes. However, this leaves us with a few oddities. To trigger the action, our button has to… toggle a Bool, which is not very obvious, and other developers might be confused as to what its purpose is. It also means that performing an action on the view requires changing state that describes nothing about the UI, which runs against the grain of a declarative framework like SwiftUI.
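To make that oddity concrete, here is a minimal sketch of the Bool-based approach. StandInUIView, TriggerTracker, TriggeredRepresentable, and TriggeredDemoView are hypothetical names used only for illustration; they are not part of the series' sample code, and this is one possible shape of the idea rather than a recommended implementation.

```swift
import SwiftUI
import UIKit

// Stand-in for the third-party view; only the method we want to call matters here.
final class StandInUIView: UIView {
    private(set) var callCount = 0
    func changeInternalValue() { callCount += 1 }
}

// Remembers the last trigger value so unrelated updates do not fire the action.
final class TriggerTracker {
    private var lastValue: Bool

    init(initialValue: Bool) { lastValue = initialValue }

    // Returns true only when the value differs from the one seen previously.
    func didChange(to newValue: Bool) -> Bool {
        defer { lastValue = newValue }
        return newValue != lastValue
    }
}

struct TriggeredRepresentable: UIViewRepresentable {
    // The value itself is meaningless; only the fact that it flipped matters.
    var trigger: Bool

    func makeCoordinator() -> TriggerTracker { TriggerTracker(initialValue: trigger) }

    func makeUIView(context: Context) -> StandInUIView { StandInUIView() }

    func updateUIView(_ uiView: StandInUIView, context: Context) {
        if context.coordinator.didChange(to: trigger) {
            uiView.changeInternalValue()
        }
    }
}

struct TriggeredDemoView: View {
    @State private var trigger = false

    var body: some View {
        VStack {
            TriggeredRepresentable(trigger: trigger)
            // Nothing at the call site says that toggling performs an action.
            Button("Perform Action") { trigger.toggle() }
        }
    }
}
```

Note how much bookkeeping is needed just to tell "the trigger flipped" apart from any other update, and how little the button's action reveals about what it does.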

Instead of adding additional (and potentially confusing) state to our views to trigger actions, we really want our button to be able to just call the action that needs to be done. But that requires the action to be exposed in such a way that the Button can access it, even though the action and its view live within another view. How can we expose that action in a way that the Button can trigger it? We’ll look at a few first-party examples from SwiftUI. First, though, let’s look at how information is passed in SwiftUI.

Two-Way Communication

Normally we pass information from one view to the next by means of the view’s initializer. For instance, providing an initial value for shouldAdd in our example is part of the call site.

ThirdPartyView(shouldAdd: shouldAdd)

What if we needed to pass information down through multiple layers of views? That’s where the environment comes into play.

There are two ways of passing information down through the environment. The first is by passing in an EnvironmentObject. Essentially, you inject an instance of a class conforming to ObservableObject with the environmentObject(_:) modifier, and any sub-view can then read that object with the @EnvironmentObject property wrapper.

class SomeObject: ObservableObject { }

let yourObject = SomeObject()

...

SomeSwiftUIView()
    .environmentObject(yourObject)

struct SomeSwiftUIView: View {
    @EnvironmentObject private var yourObject: SomeObject
}

The second way is by means of EnvironmentValues. This struct is used anytime you pull information from the environment. Here are a few things you can get from the environment in SwiftUI.

@Environment(\.colorScheme) var colorScheme
@Environment(\.dynamicTypeSize) var typeSize

The Environment wrapper takes a key path to a property on EnvironmentValues. The property we create as a var inherits its type from the property the key path points to. In these examples, both colorScheme and dynamicTypeSize are just properties exposed on the EnvironmentValues struct.
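As a small illustration of reading and overriding these values, consider the sketch below. EnvironmentAwareLabel and EnvironmentDemo are hypothetical names, and dynamicTypeSize assumes iOS 15 or later.

```swift
import SwiftUI

// Hypothetical view that reads two values from the environment.
struct EnvironmentAwareLabel: View {
    // The property types are inferred from the key paths:
    // ColorScheme and DynamicTypeSize respectively.
    @Environment(\.colorScheme) private var colorScheme
    @Environment(\.dynamicTypeSize) private var typeSize

    var body: some View {
        Text(colorScheme == .dark ? "Dark" : "Light")
            .font(typeSize.isAccessibilitySize ? .title : .body)
    }
}

struct EnvironmentDemo: View {
    var body: some View {
        VStack {
            // Uses whatever value the system placed in the environment.
            EnvironmentAwareLabel()
            // Overrides the value for this subtree only.
            EnvironmentAwareLabel()
                .environment(\.colorScheme, .dark)
        }
    }
}
```

The override applies to the modified view and everything beneath it, which is the same mechanism we can use to hand our own values down the hierarchy.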

So the environment is used to pass data down the hierarchy. How can we pass information up? That is by means of Preferences.

Whereas you use the environment to configure the subviews of a view, you use preferences to send configuration information from subviews toward their container. However, unlike configuration information that flows down a view hierarchy from one container to many subviews, a single container needs to reconcile potentially conflicting preferences flowing up from its many subviews.

Apple Documentation

To pass information via Preferences, one needs to create a struct conforming to PreferenceKey. This is a named value that is produced by the view. Part of the creation of the PreferenceKey is resolving multiple values for that key into a single value. That single value can then be used by a parent container view.
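A minimal sketch of such a key might look like the following. ItemCountKey and CountingContainer are hypothetical names chosen for illustration; the reduce function is where multiple values reported by subviews are combined into one.

```swift
import SwiftUI

// Hypothetical key: each subview reports a count, and the container receives the sum.
struct ItemCountKey: PreferenceKey {
    static let defaultValue: Int = 0

    // Called to fold each subview's value into the running result.
    static func reduce(value: inout Int, nextValue: () -> Int) {
        value += nextValue()
    }
}

struct CountingContainer: View {
    var body: some View {
        VStack {
            Text("First").preference(key: ItemCountKey.self, value: 1)
            Text("Second").preference(key: ItemCountKey.self, value: 1)
        }
        // The container reads the single, reduced value.
        .overlayPreferenceValue(ItemCountKey.self) { total in
            Text("Subviews reporting: \(total)")
        }
    }
}
```

Here the two subviews each contribute a value and the container only ever sees the reduced total, which is the reconciliation step the documentation describes.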

Now that we’ve checked out the ways we can communicate up and down a SwiftUI view hierarchy, there’s one more thing SwiftUI can teach us. Are there any examples of exposing underlying functionality to a parent container? The answer is actually yes.

Proxies

While the debate of UIKit versus SwiftUI continues among developers, many often forget one simple truth: SwiftUI is quite often just utilizing UIKit under the hood. This is why development techniques that manipulate underlying UIKit views are possible.

This truth, however, leads many to say that SwiftUI is lacking because things that are possible in UIKit are simply not exposed. This is true for elements where tasks might be triggered programmatically but are difficult to describe as a function of state. Let’s consider the ScrollView.

ScrollView {
    Text("First").id(1)
    Text("Second").id(2)
    Text("Third").id(3)
}

A ScrollView tells us to put its contents inside of, well, a scroll view. When we create it, we can tell it which axis to scroll on. However, UIScrollView in UIKit does more than that; it allows programmatic scrolling! How did Apple end up adding that functionality?

Introducing the ScrollViewReader.

ScrollViewReader { proxy in
    ScrollView {
        Text("First").id(1)
        Text("Second").id(2)
        Text("Third").id(3)
    }
}

The sole purpose of the ScrollViewReader is to expose functionality of underlying scroll views, in this case the ability to scroll to a particular view. It does so by means of a proxy object. We pass that proxy the ID of the view we want to scroll to.

ScrollViewReader { proxy in
    ScrollView {
        Text("First").id(1)
        Text("Second").id(2)
        Text("Third").id(3)
        Button("Scroll To Top") {
            proxy.scrollTo(1)
        }
    }
}

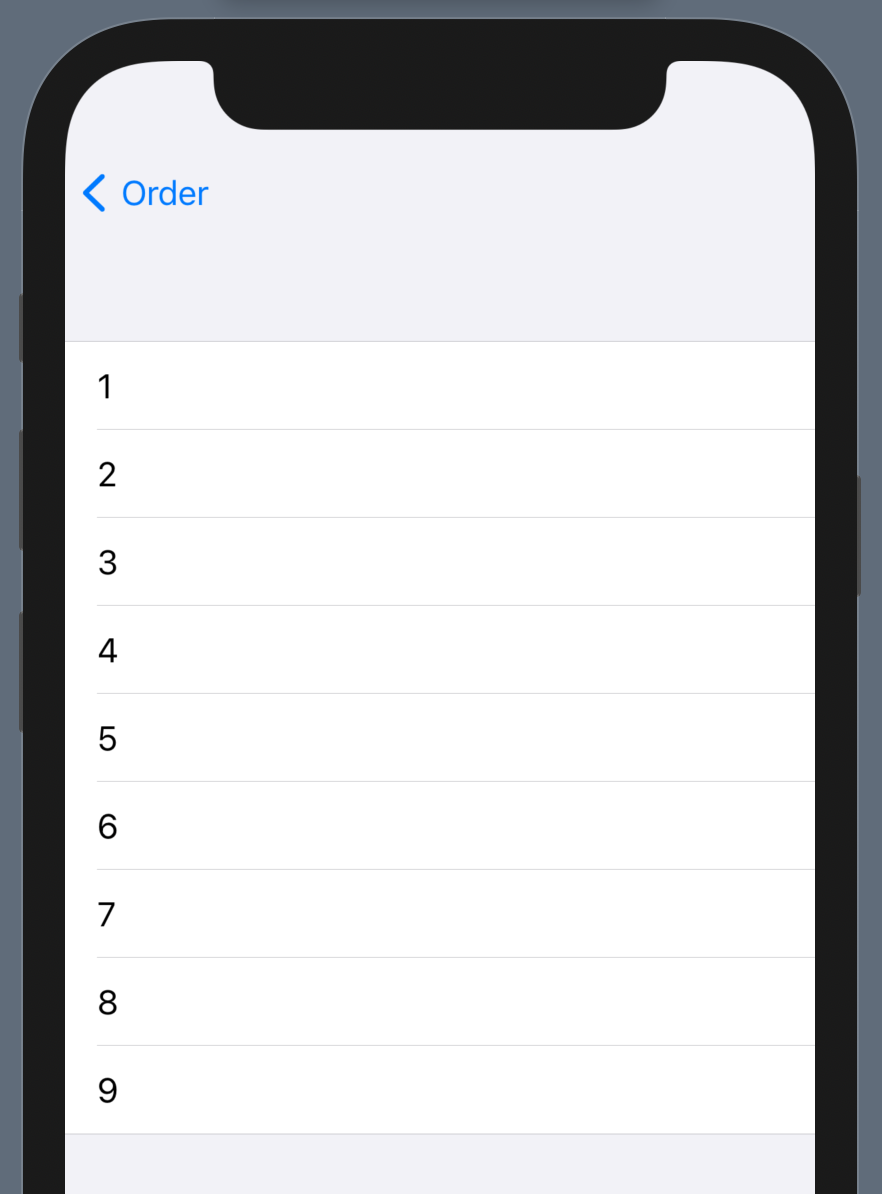

If we need to have programmatic control, we use the ScrollViewReader. If we just need a ScrollView without that, we can ignore it. Can we create something similar for our example? Could we end up with something like this?

ThirdPartyReader { proxy in
    ThirdPartyView()
    Button("Perform Action") {
        proxy.changeInternalValue()
    }
}

Creating a Proxy and a Reader

To achieve our goal, we need to create a few new objects. First, our proxy. This object’s purpose is to expose functionality of the ThirdPartyUIView without exposing the entire view itself. The design is fairly simple.

class ThirdPartyProxy {
    fileprivate weak var view: ThirdPartyUIView?

    func changeInternalValue() {
        view?.changeInternalValue()
    }
}

Our proxy has a single method, changeInternalValue(). If there were other functions of ThirdPartyUIView that we’d want to expose, we would add them here.
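Because the proxy only holds a weak reference, it never keeps the view alive, and calls made after the view is gone simply do nothing. The sketch below demonstrates that behavior with plain Swift classes; StandInView and StandInProxy are hypothetical stand-ins so the example runs without UIKit.

```swift
// Stand-in for ThirdPartyUIView so the sketch runs without UIKit.
final class StandInView {
    private(set) var value = 0
    func changeInternalValue() { value += 1 }
}

// Same shape as the proxy above: a weak reference plus a forwarding method.
final class StandInProxy {
    weak var view: StandInView?

    func changeInternalValue() {
        view?.changeInternalValue()
    }
}

let standInProxy = StandInProxy()
var standInView: StandInView? = StandInView()
standInProxy.view = standInView

// While the view exists, the call is forwarded to it.
standInProxy.changeInternalValue()
let valueAfterCall = standInView?.value

// Once the only strong reference is released, the weak reference becomes nil
// and further calls are harmless no-ops.
standInView = nil
standInProxy.changeInternalValue()
let proxyLostView = standInProxy.view == nil
```

This is also why every method on the proxy goes through optional chaining: the proxy can outlive the view it forwards to.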

Now, how will we expose the proxy? We’ll copy the above example and build our own “reader” object.

struct ThirdPartyReader<Content: View>: View {
    private var content: (ThirdPartyProxy) -> Content

    init(@ViewBuilder _ content: @escaping (ThirdPartyProxy) -> Content) {
        self.content = content
    }

    var body: some View {
        content(proxy)
    }
}

The ThirdPartyReader’s purpose is to provide a proxy instance to our view content. It’s a very simple container! However, how will we get the proxy? And how will the proxy get the view?

Here, there are two approaches we could take: (1) pass the view or its actions up through Preferences to this container, or (2) pass an object into the Environment that captures what we need.

For now, we’ll go with option 2. Option 1 is definitely possible but has its own quirks. If you build a good example using it, I’d love to hear about it!

Getting the View for Our Proxy

We’re working down through the hierarchy in the Environment. Thus, we start by creating a proxy instance in our new reader.

struct ThirdPartyReader<Content: View>: View {
    @StateObject private var proxy = ThirdPartyProxy()

Note that we’re creating this as a StateObject, so our reader owns the instance of this object. However, using this property wrapper requires that our proxy conform to ObservableObject. We’ll add that conformance, which also sets a useful precedent: a proxy might benefit from having @Published properties, depending on the view being wrapped.

With the proxy created and owned by this view, we now pass it into the Environment. However, we’re not just passing it using .environmentObject. Doing so would create a tight requirement for any child view: a view that declares an @EnvironmentObject crashes at runtime if no object was provided, so we would have to provide a proxy even when we’re not in a reader.

Instead, we’ll make use of the EnvironmentValues.

extension EnvironmentValues {
    private struct ThirdPartyProxyEnvironmentKey: EnvironmentKey {
        static var defaultValue: ThirdPartyProxy? = nil
    }

    var thirdPartyProxy: ThirdPartyProxy? {
        get { self[ThirdPartyProxyEnvironmentKey.self] }
        set { self[ThirdPartyProxyEnvironmentKey.self] = newValue }
    }
}

We first create a new EnvironmentKey. It tells us the type to be passed into the environment, which will be an optional ThirdPartyProxy. By default, it’ll be nil, which means no proxy exists in the environment.

We’ll use the above to pass our new proxy into the environment of the reader’s view.

var body: some View {
    content(proxy)
        .environment(\.thirdPartyProxy, proxy)
}
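Since the reader, proxy, and environment key have been introduced in separate fragments, it may help to see them assembled in one place. The following is a sketch of how the pieces could fit together, including the ObservableObject conformance mentioned earlier. The empty ThirdPartyUIView is only a stand-in so the block compiles on its own, and the computed defaultValue is a small variation assumed here to avoid shared mutable state.

```swift
import SwiftUI
import UIKit

// Stand-in for the real third-party view so this sketch is self-contained.
final class ThirdPartyUIView: UIView {
    func changeInternalValue() { }
}

// ObservableObject conformance lets the reader own the proxy via @StateObject.
final class ThirdPartyProxy: ObservableObject {
    // fileprivate: the representable that sets this must live in the same file.
    fileprivate weak var view: ThirdPartyUIView?

    func changeInternalValue() {
        view?.changeInternalValue()
    }
}

extension EnvironmentValues {
    private struct ThirdPartyProxyEnvironmentKey: EnvironmentKey {
        // nil means "no reader is present above this view".
        static var defaultValue: ThirdPartyProxy? { nil }
    }

    var thirdPartyProxy: ThirdPartyProxy? {
        get { self[ThirdPartyProxyEnvironmentKey.self] }
        set { self[ThirdPartyProxyEnvironmentKey.self] = newValue }
    }
}

struct ThirdPartyReader<Content: View>: View {
    // The reader creates and owns the proxy for its lifetime.
    @StateObject private var proxy = ThirdPartyProxy()

    private var content: (ThirdPartyProxy) -> Content

    init(@ViewBuilder _ content: @escaping (ThirdPartyProxy) -> Content) {
        self.content = content
    }

    var body: some View {
        // Hand the proxy to the closure and publish it to all descendants.
        content(proxy)
            .environment(\.thirdPartyProxy, proxy)
    }
}
```

The same proxy instance reaches the caller through the closure parameter and reaches descendant views through the environment, which is what lets a button and the wrapped view meet in the middle.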

Now, any view content provided to the reader container will find a proxy object in the environment. We can now check the environment in our ThirdPartyView and update our view representable to set the view on that proxy.

struct ThirdPartyViewRepresentable: UIViewRepresentable {
    var delegate: ThirdPartyViewDelegate? = nil
    var proxy: ThirdPartyProxy?
    var shouldAdd: Bool

    func makeUIView(context: Context) -> ThirdPartyUIView {
        let view = ThirdPartyUIView()
        view.shouldAdd = shouldAdd
        view.delegate = delegate
        proxy?.view = view
        return view
    }

    func updateUIView(_ uiView: ThirdPartyUIView, context: Context) {
        uiView.shouldAdd = shouldAdd
    }
}

struct ThirdPartyView: View {
    @Environment(\.thirdPartyProxy) private var proxy
    var shouldAdd: Bool
    @State private var coordinator = ThirdPartyCoordinator()

    var body: some View {
        ThirdPartyViewRepresentable(delegate: coordinator, proxy: proxy, shouldAdd: shouldAdd)
    }
}

With the above changes, we’ve added the last piece and have enabled our SwiftUI views to access underlying methods of our wrapped UIView. Our fully functional sample view now looks like this:

struct ThirdPartyDemoView: View {
    @State private var shouldAdd = true
    @State private var currentValue = 0

    var body: some View {
        ThirdPartyReader { proxy in
            Text("\(currentValue)")
            ThirdPartyView(shouldAdd: shouldAdd)
                .viewChangedValueTo { view, newValue in
                    currentValue = newValue
                }
            Toggle("Add?", isOn: $shouldAdd)
            Button("Perform Action") {
                proxy.changeInternalValue()
            }
        }
    }
}

In these posts, we’ve looked at how we can fully use a UIView within a SwiftUI hierarchy. To do so, we’ve simply used what Apple has provided since SwiftUI was introduced with iOS 13. And while SwiftUI might be the future of building apps, it will likely continue to be built on top of UIKit, and UIKit likely has a long future ahead of it. Being able to work with UIKit in SwiftUI is just another tool in our ever-growing toolbox as developers on Apple platforms.

The full sample code is available as a GitHub Gist.